- Keeping data doppelgangers in synch

All databases face consistency considerations as data undergoes constant revision, and these considerations are most complex for those using distributed storage systems. In a general sense, consistency here has to do with the integrity and accuracy of the database. It means that data reading, writing, and updating operations must produce reliable, predictable results – both under normal conditions and when transaction or system failures occur.

However, when discussing database consistency on a more detailed level, the term “consistency” must be used with care. It can refer to a number of different concepts which may occasionally overlap, but are nevertheless distinct. All databases must meet established transaction consistency requirements, but using a distributed storage system raises the additional issue of managing synchronization between each replica of the data. This can be termed “multi-replica consistency”.

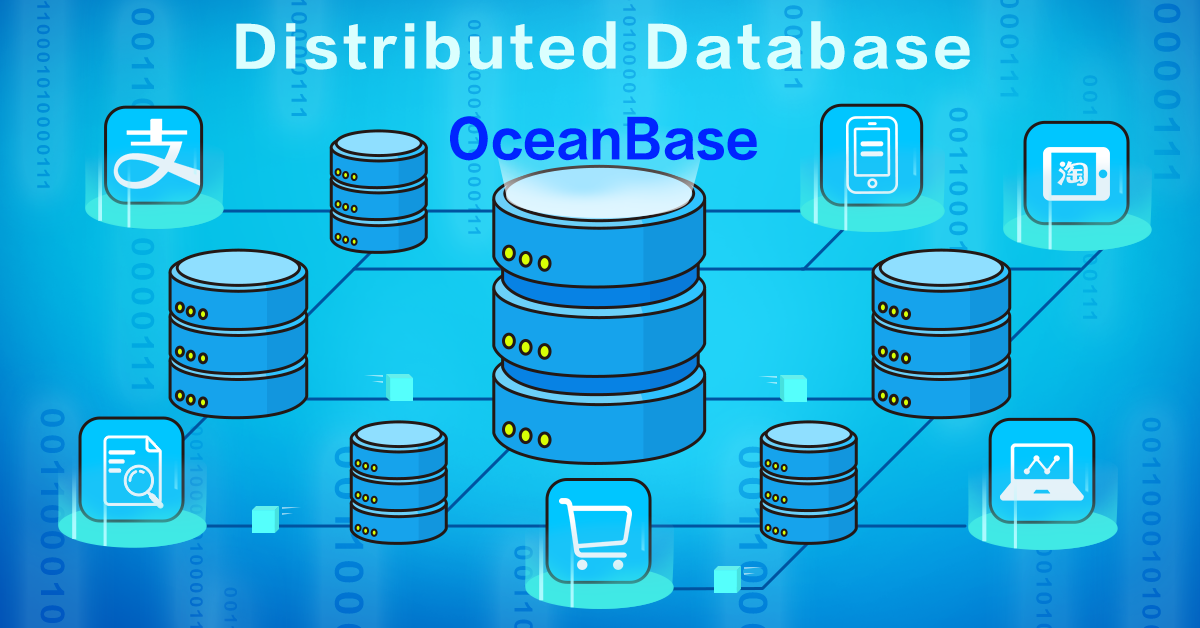

How the consistency model of a given database implements these different concepts of consistency also depends on whether the database is relational or non-relational. Several consistency models exist for NoSQL distributed storage databases, including CosmosDB and Cassandra, but developing a consistency model that can accommodate the more complex transactions of relational databases is a bigger challenge. It is one that the Alibaba tech team dealt with when developing the consistency model for OceanBase, Alibaba’s own distributed relational database.

Before looking at the consistency solutions provided by CosmosDB, Cassandra and OceanBase in detail, it will help to define what is meant here by a distributed storage system and what meanings of consistency are relevant in this context.

Defining distributed storage system

The simplest storage system imaginable would have a single client (process) and a single server (service process). The client initiates read and write operations sequentially, and the server processes each in sequence. From the perspective of both the server and the client, each operation can see the result of the previous operation. Additionally, the service process manages and stores the data in full.

A typical distributed storage system in the real world elaborates on this basic system in three ways:

- It is multi-client

- It is multi-service

- Data is partitioned and stored in shards on different servers

Multi-client

When multiple clients operate concurrently, their operations affect each other. For example, a client must be able to read data that is written by another client. A typical stand-alone concurrent program is an example of a single-service, multi-client model, consisting of a single program in which multiple threads share memory.

Multi-service

This means that multiple service processes on different machines each store a replica of the same data at the same time. Each client read and write operation can theoretically be issued to any one of the services where the replicas are located.

In this case, a synchronization mechanism must be used to ensure that the various data replicas are identical. Otherwise, there may be cases where previously written data cannot be read, or previously read data cannot be read later. This is what is referred to by “multi-replica consistency”.

Having multiple service processes can be desirable for a number of reasons, including making the back-end storage system more robust.

Both multi-client and multi-service

In a multi-client, multi-service system, different clients may wish to simultaneously perform read and write operations on different replicas of the same data. How this is managed by the system then depends on the nature of the application.

For example, if client A performs a write operation on a given row and client B does not need to read the latest data immediately, it may be sufficient to allow client B to perform a read operation on a different data replica in the meantime. Otherwise, client B may have to wait until the write operation and data synchronization are complete.

Data partitioning

In systems like OceanBase, a stand-alone machine cannot always accommodate a replica of the data in its entirety. Therefore, database tables and partitions of the tables are distributed on multiple machines. Each service process is responsible for a specific replica of certain table partitions.

Naturally, this complicates read and write semantics. For example, if a write operation modifies two different data items on two different partitions of two different service processes, and a subsequent read operation reads these two data items, is the read operation allowed to read the modification of one data item, but not that of the other?

Summary

In summary, the distributed storage system model under discussion here has the following features:

- Data is divided into multiple shards stored on multiple service nodes.

- Each shard has multiple replicas stored on different service nodes.

- Multiple clients concurrently access the system and perform read and write operations, and each read and write operation takes an unequal amount of time in the system.

- Read and write operations are atomic, i.e. performed in full or not at all (except where noted below).

Defining Consistency

The concept of multi-replica consistency in a distributed storage system has already been touched on above. Let us revisit it here in more detail before seeing how it differs from consistency in the context of database transactions.

Multi-replica consistency in a client-centric consistency model

In a multi-replica storage system, the data synchronization protocol must guarantee the following to ensure consistency:

- All replicas of the same data can receive all write operations (no matter how long it takes).

- Each replica will perform write operations in the same designated order.

In a client-centric consistency model, the synchronization protocol must satisfy four conditions:

- Monotonic read

If a client reads version n of some data, the version it reads next must have a version number greater than or equal to n.

- Read-your-writes

If a client writes version n of some data, the version it reads next must have a version number greater than or equal to n.

- Monotonic write

Two different write operations from the same client must be performed in the same order on all replicas of the data, namely the order in which they arrived at the storage system. This prevents write operations from being lost.

- Writes-follow-reads

After a client reads version n of some data, the next write operation must be performed on a replica with a version number greater than or equal to n.
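The version-number rules above can be checked with a little per-client bookkeeping. The following is a minimal sketch, not any particular database's implementation: the `Session` class and its method names are hypothetical, and it assumes each replica can report the latest version it has applied. Monotonic write concerns the order in which replicas apply writes, so it is not modeled here.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical client session that tracks the versions it has seen."""
    last_read: int = 0     # highest version this client has read
    last_written: int = 0  # highest version this client has written

    def can_read_from(self, replica_version: int) -> bool:
        # Monotonic read: never go back before a version already read.
        # Read-your-writes: never miss a version this client wrote.
        return replica_version >= max(self.last_read, self.last_written)

    def can_write_to(self, replica_version: int) -> bool:
        # Writes-follow-reads: the write must land on a replica that has
        # caught up to everything this client has already read.
        return replica_version >= self.last_read

    def record_read(self, version: int) -> None:
        self.last_read = max(self.last_read, version)

    def record_write(self, version: int) -> None:
        self.last_written = max(self.last_written, version)


session = Session()
session.record_read(3)    # the client read version 3
session.record_write(5)   # and then wrote version 5

print(session.can_read_from(4))  # False: would violate read-your-writes
print(session.can_read_from(5))  # True
print(session.can_write_to(3))   # True: replica has caught up to the reads
print(session.can_write_to(2))   # False: replica is behind what was read
```

A client library built along these lines would route each request to a replica that passes the relevant check, or wait until one catches up.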

ACID principles of database transactions

ACID, which stands for “atomicity”, “consistency”, “isolation”, and “durability”, is a well-known set of four principles designed to collectively ensure the validity of database transactions. Of the four, “consistency” and “isolation” are liable to cause confusion with the concept of multi-replica consistency – the former due to its name and the latter due to its meaning.

ACID consistency

Consistency as part of the ACID model simply means that the data read and write operations of a transaction must meet certain global consistency constraints, such as uniqueness constraints and foreign key constraints. It pertains only to the transaction itself, and is not concerned with how many replicas of the data exist.

ACID isolation

Isolation has to do with limiting the order in which certain kinds of concurrent operations are performed. Although a similar concept exists with data synchronization between multiple replicas – for example, monotonic write – isolation in ACID is concerned with limiting the extent to which concurrently executed transactions can see each other, and not whether the data exists in single or multiple replicas.

Although under the ACID model database transactions must be atomic, the intermediate state of an atomic transaction may need to be observed by other concurrent transactions in a real-world system. As mentioned earlier, when discussing data synchronization it is assumed that the read and write operations within a transaction are atomic.

Comparing NoSQL consistency models with Alibaba’s OceanBase

Consistency models do not simply implement consistency in its purest sense, but can provide different levels of consistency between data replicas depending on user requirements.

This is partly due to cost-benefit considerations – stricter consistency levels consume more resources, meaning higher latency, lower availability, and reduced scalability. Less strict consistency levels can be more efficient and less resource-intensive by allowing reading of stale data. In Internet services that use databases, business components are sometimes permitted to read slightly stale data.

For users, however, the stricter the consistency level is, the better. A strict consistency level can also greatly reduce application complexity in certain particularly complex scenarios.

Let us look at CosmosDB, Cassandra, and OceanBase in terms of the different consistency levels they provide.

Cosmos DB

Azure’s Cosmos DB offers five levels of consistency, aimed at providing low read/write latency, high availability, and better read scalability from front-end to back-end. They are listed below in order of strictest to least strict.

1. Strong consistency

- Guarantees that read operations always read the latest version of data.

- Requires write operations to be synchronized to a majority of replicas before they can be successfully submitted.

- Requires read operations to receive a reply from a majority of replicas before being returned to the client.

- Prohibits read operations from seeing the results of write operations that are unsubmitted or partial, and ensures that they can always read the result of the most recent write operation.

- Guarantees global (inter-session) monotonic read, read-your-writes, monotonic write, and writes-follow-reads.

- Has the highest read latency of all levels.

2. Bounded staleness consistency

- Guarantees that the version read lags behind the latest version of the data by at most K versions.

- Maintains a sliding window to guarantee the global order of operations outside of the window.

- Guarantees monotonic read within a region.

3. Session consistency

- Guarantees monotonic read, monotonic write, and read-your-writes within a session, but not between sessions.

- Maintains the version information of read and write operations in client sessions, passing between multiple replicas.

- Offers low read and write latency.

4. Consistent Prefixes

- Guarantee eventual consistency in situations where there are no more write operations.

- Guarantee that read operations do not see out-of-order write operations. For example, if write operations are performed in the order ‘A, B, C’, a client may see ‘A’, ‘A, B’, or ‘A, B, C’, but not ‘A, C’ or ‘B, A, C’.

- Guarantee monotonic read within each session.

5. Eventual consistency

- Guarantees eventual consistency.

- Offers weak consistency with comparatively high levels of stale data.

- Offers the lowest read/write latency and highest availability of all levels because it can choose to read any replica.

According to Azure, 73% of CosmosDB users use the session consistency level and 20% use the bounded staleness consistency level.
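Two of the guarantees listed above, the K-version bound of bounded staleness and the ordering rule of consistent prefixes, are easy to state as predicates. The sketch below is illustrative only and does not use the Cosmos DB SDK. The function names are hypothetical, and it assumes the committed write order and the replica version numbers are known to the caller.

```python
def is_consistent_prefix(writes, observed):
    """True if `observed` is a prefix of the committed write order `writes`."""
    return list(observed) == list(writes[:len(observed)])


def within_staleness_bound(latest_version, replica_version, k):
    """True if the replica lags the latest version by at most k versions."""
    return 0 <= latest_version - replica_version <= k


writes = ["A", "B", "C"]
print(is_consistent_prefix(writes, ["A", "B"]))       # True
print(is_consistent_prefix(writes, ["A", "C"]))       # False: skips B
print(is_consistent_prefix(writes, ["B", "A", "C"]))  # False: reordered
print(within_staleness_bound(100, 97, k=5))           # True
print(within_staleness_bound(100, 90, k=5))           # False
```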

Cassandra

Like CosmosDB, Cassandra also uses a majority replica protocol. It offers different consistency levels by controlling the number and locations of replicas accessed by read and write operations. The combination of read/write settings used will ultimately correspond to one of two system consistency levels, i.e. strong or weak.

Write operation consistency

Write operation consistency configurations define which replicas a write operation must be successfully written or synchronized to before the operation is returned to the client. The configurations are as follows:

- ALL

This is the strongest consistency level. Write operations must be synchronized to all replicas and applied to the memory. However, the failure of any single replica will cause write operations to fail and leave the system unavailable for writes.

- EACH_QUORUM

Write operations must be synchronized to the majority replica node in each data center. In clusters deployed in multiple data centers, QUORUM consistency guarantees can be provided in each data center.

- QUORUM

Write operations must be synchronized to the majority replica node. When a few replicas are down, write operations can continue to be performed.

- LOCAL_QUORUM

Write operations must be synchronized to the majority replica of the data center where the coordinator node is located. This avoids the high latency caused by the cross-data center synchronization when multiple data centers are used. Within a single data center, a small amount of downtime can be tolerated.

- ONE

Write operations must be written to at least one replica.

- TWO

Write operations must be written to at least two replicas.

- THREE

Write operations must be written to at least three replicas.

- LOCAL_ONE

Write operations must be written to at least one replica in the local data center. In clusters deployed in multiple data centers, the same disaster tolerance effect as ONE can be achieved, and write operations are limited to the local data center.

Read operation consistency

The following consistency configurations exist for read operations:

- ALL

Read operations are returned to the client after all replica nodes reply. If any single replica node fails, read operations fail and the system becomes unavailable for reads.

- QUORUM

Read operations are returned to the client after the majority replica returns a reply.

- LOCAL_QUORUM

Read operations are returned to the client after the majority replica in the data center returns a reply. This avoids high latency of cross-data center access.

- ONE

Read operations are returned to the client after the closest replica node replies. Stale data may be returned.

- TWO

Read operations are returned to the client after the two closest replica nodes reply.

- THREE

Read operations are returned to the client after the three closest replica nodes reply.

- LOCAL_ONE

Read operations are returned to the client after the closest replica node in the local data center replies.

System consistency levels

Regardless of multi-data center factors, any combination of read/write settings used will correspond to either a strong or weak system consistency level:

- Strong consistency

The sum of the number of write and read replicas is greater than the total number of replicas. This guarantees that a read operation will always be able to read the most recently written data.

- Weak consistency

The sum of the number of write and read replicas is less than or equal to the total number of replicas. This means that read operations may be unable to read the latest data, and may even read data older than the previous read operation. In essence, this is eventual consistency.
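The strong/weak rule comes down to a single inequality: with N replicas, W write replicas, and R read replicas, every read set overlaps every write set exactly when W + R > N. The sketch below works this out for the levels that do not depend on data center layout. It is a hypothetical helper, not part of any Cassandra driver, and it assumes a single data center (so the `LOCAL_` and `EACH_` variants are left out).

```python
def quorum(n: int) -> int:
    """Smallest number of replicas that forms a majority of n."""
    return n // 2 + 1


def replicas_required(level: str, n: int) -> int:
    """Replicas that must respond for a given consistency level."""
    required = {"ONE": 1, "TWO": 2, "THREE": 3, "QUORUM": quorum(n), "ALL": n}
    return required[level]


def system_consistency(write_level: str, read_level: str, n: int) -> str:
    w = replicas_required(write_level, n)
    r = replicas_required(read_level, n)
    # Strong only if every read set must intersect every write set.
    return "strong" if w + r > n else "weak"


print(system_consistency("QUORUM", "QUORUM", 3))  # strong: 2 + 2 > 3
print(system_consistency("ALL", "ONE", 3))        # strong: 3 + 1 > 3
print(system_consistency("ONE", "QUORUM", 3))     # weak:   1 + 2 = 3
print(system_consistency("ONE", "ONE", 3))        # weak:   1 + 1 < 3
```

Note the third case: when the sum exactly equals the number of replicas, a read can still miss the one replica that holds the latest write, so the combination is weak.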

OceanBase

NoSQL databases like CosmosDB and Cassandra provide atomic guarantees only for single-row operations. However, the basic operation of a relational database like OceanBase is an SQL statement, which is inherently a multi-row operation supporting multi-statement transactions and rollbacks.

In relational databases, atomicity must be guaranteed at both the SQL statement level and the transaction level, making consistency solutions more complex and costly to produce. Fortunately, OceanBase overcomes these obstacles and provides a model with configurable consistency levels and a synchronization mechanism that minimizes recovery time after system failures. This mechanism builds on the majority replica model with the addition of the primary replica.

OceanBase’s synchronization mechanism

OceanBase uses the Multi-Paxos consensus algorithm to synchronize redo logs between multiple data replicas. Each transaction is considered successful once a majority of replicas have returned a reply.

Independent voting by the multiple replicas is used to select the primary replica. The primary replica is then responsible for executing all data manipulation statements. The majority that acknowledges a log submission must include the primary replica itself.

When the primary replica goes down, the remaining majority of replicas selects a new primary replica. Because the logs of every completed transaction were submitted to a majority, at least one of the remaining replicas holds a copy of those logs. The new primary replica initiates a system recovery by communicating with the other replicas to obtain the logs of all submitted transactions.

This mechanism means that in the event of a minority downtime, OceanBase can guarantee no data is lost, and can achieve a recovery time of under one minute for strong consistency read and write services.
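The reason a minority failure loses no data is that any two majorities share at least one replica. The sketch below illustrates only that overlap argument. It is not an implementation of Multi-Paxos or of OceanBase's protocol: the `Replica` class and the `replicate` and `recover` functions are hypothetical, there is no leader election, and the handling of uncommitted log entries that a real protocol needs is ignored.

```python
class Replica:
    def __init__(self, name):
        self.name = name
        self.log = {}      # log sequence number -> redo record
        self.alive = True


def replicate(primary, followers, lsn, record):
    """Append a redo record; succeed once a majority of replicas hold it."""
    primary.log[lsn] = record
    acks = 1  # the primary's own copy counts toward the majority
    for follower in followers:
        if follower.alive:
            follower.log[lsn] = record
            acks += 1
    return acks > (len(followers) + 1) // 2


def recover(survivors):
    """Collect every log record held by the surviving replicas."""
    merged = {}
    for replica in survivors:
        merged.update(replica.log)
    return merged


a, b, c = Replica("a"), Replica("b"), Replica("c")

c.alive = False                                       # c misses record 1
ok1 = replicate(a, [b, c], 1, "UPDATE t SET x = 1")
c.alive, b.alive = True, False                        # b misses record 2
ok2 = replicate(a, [b, c], 2, "UPDATE t SET x = 2")
b.alive = True

a.alive = False                                       # the primary fails
recovered = recover([b, c])
print(ok1, ok2, sorted(recovered))                    # True True [1, 2]
```

Neither follower holds the full log on its own, yet together the surviving majority still has every record that was reported as successful.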

Consistency levels

Strong consistency levels

In OceanBase, if the execution of a statement involves multiple table partitions, the primary replicas of these partitions may be assigned to different service nodes. A strict database isolation level requires that read requests involving multiple partitions only see a “snapshot,” that is, they do not see any partial transactions. Snapshots are provided by maintaining some form of global read version numbers, but this comes at a high cost.
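To make the snapshot idea concrete, here is a minimal sketch of multi-version reads across two partitions. It is not OceanBase's implementation: the `Partition` class is hypothetical, commit versions are plain integers, and it assumes the snapshot version handed to a reader is never ahead of a transaction that is still being applied.

```python
class Partition:
    def __init__(self):
        self.versions = {}  # key -> list of (commit_version, value), ascending

    def write(self, key, commit_version, value):
        self.versions.setdefault(key, []).append((commit_version, value))

    def read(self, key, snapshot_version):
        # Return the newest value committed at or before the snapshot version.
        visible = [value for cv, value in self.versions.get(key, [])
                   if cv <= snapshot_version]
        return visible[-1] if visible else None


p1, p2 = Partition(), Partition()
p1.write("x", 1, 100)
p2.write("y", 1, 100)

# A transaction with commit version 2 moves 30 from x to y.
# Partition 1 has applied its half; partition 2 has not yet.
p1.write("x", 2, 70)

# A reader at snapshot version 1 sees neither half of the transaction.
before = (p1.read("x", 1), p2.read("y", 1))
print(before)  # (100, 100)

p2.write("y", 2, 130)
after = (p1.read("x", 2), p2.read("y", 2))
print(after)   # (70, 130)
```

Without the shared snapshot version, a reader taking the newest value from each partition in the middle of the transaction would have seen x = 70 and y = 100, which is a partial transaction.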

If the application allows (as is often the case), the read consistency level can be adjusted so that the system is guaranteed to be able to read the latest written data, but not in snapshot form. This meets the criteria for a strong multi-replica consistency level; however, it is technically a departure from ACID principles.

Weak consistency levels

OceanBase provides two weak levels of consistency – equivalent to eventual consistency and prefix consistency – and configurable bounded staleness consistency at both levels.

- Eventual consistency

This is the weakest level, where all replicas can provide read services. When any replica goes down, the client can promptly retry the read service on another replica, even when the majority replica is down.

The synchronization protocol guarantees the successful storage of logs, but does not require the log to be replayed on the majority replica. This means that the data version of each replica to complete playback can be different even for multiple copies of the same partition, meaning read operations may read staler data than during a previous read.

- Consistent Prefixes

Consistent Prefixes are provided because most applications using relational databases cannot tolerate out-of-order read operations. In this mode, read version numbers are recorded within the database connection. Monotonic read is guaranteed in every database connection.

This mode is generally used for accessing the read database in the OceanBase cluster. The service itself is a read/write-separated architecture.

- Bounded staleness consistency

At both weak levels, OceanBase can provide bounded staleness consistency while guaranteeing session-level monotonic read.

When OceanBase is deployed in multiple locations, there is an inherent latency between cross-region replicas. Setting a latency tolerance for read operations allows for configurable bounded staleness. For example, if the tolerance is set to 30 seconds, the read operation reads the local replica if its latency is under 30 seconds. If its latency is more than 30 seconds, the read operation seeks to read other replicas in the region where the primary replica is located. If no replica satisfies the latency requirement, the primary replica is read.

This approach ensures minimal read latency and better availability than strong consistency reads.
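The fallback order described above (local replica, then replicas near the primary, then the primary itself) can be sketched as a small routing function. This is an illustration under assumptions, not OceanBase code: the `choose_replica` function, the dictionary fields, and the region and replica names are all hypothetical, and each replica's lag is assumed to be known in seconds.

```python
def choose_replica(replicas, local_region, primary_region, tolerance_s):
    """Pick a replica name for a weak read with a bounded-staleness tolerance."""
    # 1. Prefer a replica in the client's own region if it is fresh enough.
    for r in replicas:
        if r["region"] == local_region and r["lag_s"] < tolerance_s:
            return r["name"]
    # 2. Otherwise try non-primary replicas in the primary's region.
    for r in replicas:
        if (r["region"] == primary_region and not r["is_primary"]
                and r["lag_s"] < tolerance_s):
            return r["name"]
    # 3. Fall back to the primary replica, which is always up to date.
    return next(r["name"] for r in replicas if r["is_primary"])


replicas = [
    {"name": "hz-1", "region": "hangzhou", "lag_s": 0, "is_primary": True},
    {"name": "hz-2", "region": "hangzhou", "lag_s": 2, "is_primary": False},
    {"name": "sz-1", "region": "shenzhen", "lag_s": 45, "is_primary": False},
]

print(choose_replica(replicas, "shenzhen", "hangzhou", 60))  # sz-1
print(choose_replica(replicas, "shenzhen", "hangzhou", 30))  # hz-2
print(choose_replica(replicas, "shenzhen", "hangzhou", 1))   # hz-1
```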

In summary, OceanBase provides strong, bounded staleness, prefix, and eventual consistency levels and can guarantee the complete ACID transaction semantics of relational databases.
